For example, for post-processing purposes, you might need only a subset of variables.

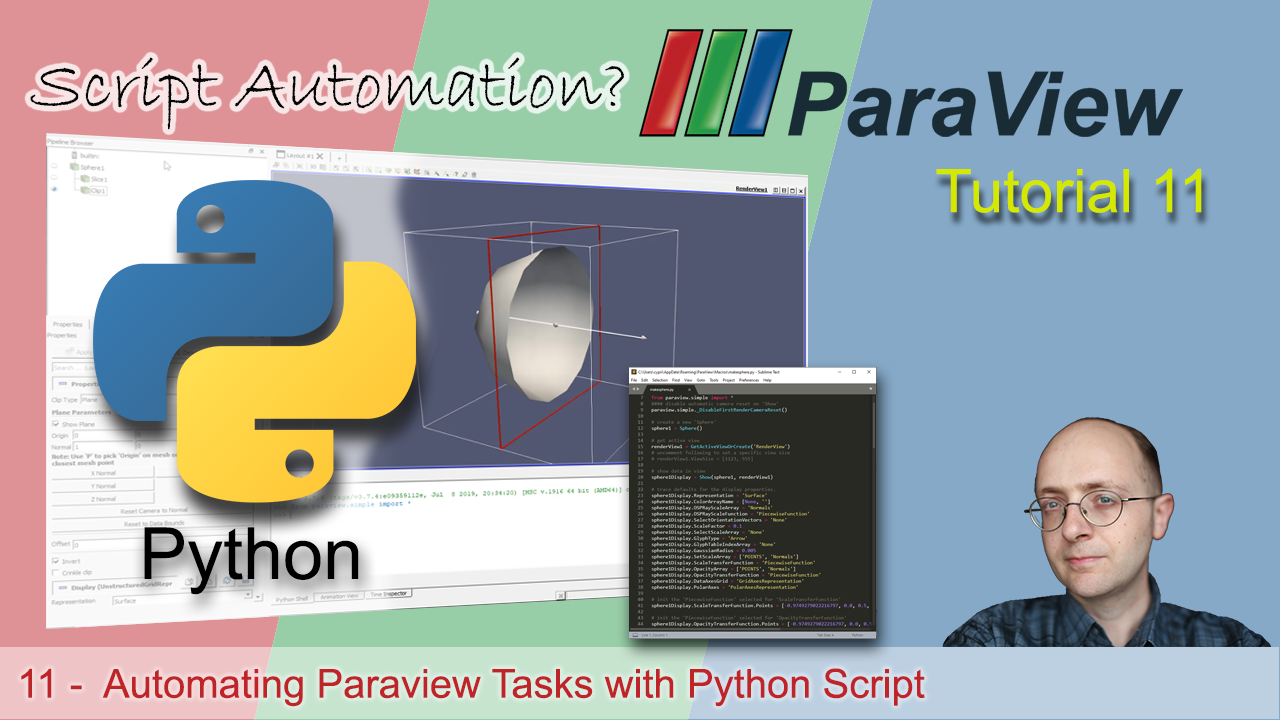

In OpenFOAM, you can control which variables or fields are written at specific times. For details see:ĥ Using I/O and reducing the amount of data and files If you are using an OpenFOAM version wich comes with pre-installed Intel MPI (like, for example cae/openfoam/v1712-impi) you will have to modify the batch script to use all the advantages of Intel MPI for parallel calculations. Mpirun $ -parallel &Īttention: The script above will run a parallel OpenFOAM Job with pre-installed OpenMPI. Name of the solver is given in the "EXECUTABLE" variable # remove decomposePar if you already decomposed your case beforehandĭecomposeParHPC & # starting the solver in parallel. #!/bin/bash # Allocate nodes #SBATCH -nodes=2 # Number of tasks per node #SBATCH -ntasks-per-node=40 # Queue class #SBATCH -partition=multiple # Maximum job run time #SBATCH -time=4:00:00 # Give the job a reasonable name #SBATCH -job-name=openfoam # File name for standard output (%j will be replaced by job id) #SBATCH -output=logs-%j.out # File name for error output #SBATCH -error=logs-%j.err # User defined variables FOAM_VERSION = "8" EXECUTABLE = "icoFoam" MPIRUN_OPTIONS = "-bind-to core -map-by core -report-bindings" If you want your mesh to be divided in other way, specifying the number of segments it should be cut in x, y or z direction, for example, you can use "simple" or "hierarchical" methods.Īttention: openfoam module loads automatically the necessary openmpi module for parallel run, do NOT load another version of mpi, as it may conflict with the loaded openfoam version.Ī job-script to submit a batch job called job_openfoam.sh that runs icoFoam solver with OpenFoam version 8, on 80 processors, on a multiple partition with a total wall clock time of 6 hours looks like: It trims the mesh, collecting as many cells as possible per processor, trying to avoid having empty segments or segments with not enough cells. The automatic decomposition method is " scotch". 
The number of subdomains, in which the geometry will be decomposed, is specified in " system/decomposeParDict", as well as the decomposition method to use. There is, of course, a mechanism that connects properly the calculations, so you don't loose your data or generate wrong results.ĭecomposition and segments building process is handled by decomposeParutility. Then, you start the solver in parallel, letting OpenFOAM to run calculations concurrently on these segments, one processor responding for one segment of the mesh, sharing the data with all other processors in between. That means, for example, if you want to run a case on 8 processors, you will have to decompose the mesh in 8 segments, first. $ cat $HOME/.ssh/id_rsa.pub > $HOME/.ssh/authorized_keysĤ Building an OpenFOAM batch file for parallel processing 4.1 General informationīefore running OpenFOAM jobs in parallel, it is necessary to decompose the geometry domain into segments, equal to the number of processors (or threads) you intend to use. $ mpirun -bind-to core -map-by core -report-bindings snappyHexMesh -overwrite -parallelįor running jobs on multiple nodes, OpenFOAM needs passwordless communication between the nodes, to copy data into the local folders.Ī small trick using ssh-keygen once will let your nodes to communicate freely over rsh.ĭo it once (if you didn't do it already in the past): As the data will be processed directly on the nodes, and may be lost if the job is cancelled before the data is copied back into the case folder.įor example, if you want to run snappyHexMeshin parallel, you may use the following commands: Therefore you may use *HPC scripts, wich will copy your data to the node specific folders after running the decomposePar, and copy it back to the local case folder before running reconstructPar.ĭon't forget to allocate enough wall-time for decomposition and reconstruction of your cases. 
OpenFOAM (Open-source Field Operation And Manipulation) is a free, open-source CFD software package with an extensive range of features to solve anything from complex fluid flows involving chemical reactions, turbulence and heat transfer, to solid dynamics and electromagnetics.Īfter loading the desired module, type to activate the OpenFOAM applicationsįor a better performance on running OpenFOAM jobs in parallel on bwUniCluster, it is recommended to have the decomposed data in local folders on each node. 6 OpenFOAM and ParaView on bwUniCluster.5 Using I/O and reducing the amount of data and files.4 Building an OpenFOAM batch file for parallel processing.
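The decomposition settings from section 4.1 can also be generated from the job's resources, so that numberOfSubdomains in system/decomposeParDict always matches the number of MPI tasks. The following is a minimal sketch, not part of the bwUniCluster *HPC tooling. The function names count_subdomains and write_decompose_dict are hypothetical. The sketch assumes that Slurm exports SLURM_NTASKS, or SLURM_JOB_NUM_NODES and SLURM_NTASKS_PER_NODE, inside the batch job. It only writes the automatic "scotch" method, because "simple" and "hierarchical" additionally need a coefficients subdictionary (simpleCoeffs or hierarchicalCoeffs) that this sketch does not generate.

```shell
#!/bin/bash
# Hypothetical helper (not part of the bwUniCluster *HPC scripts): write a
# minimal system/decomposeParDict whose numberOfSubdomains matches the
# number of MPI tasks, using the automatic "scotch" method.

# Determine the number of subdomains: an explicit argument wins, otherwise
# fall back to the task count Slurm is assumed to export inside a batch job.
count_subdomains() {
    if [ -n "${1:-}" ]; then
        echo "$1"
    elif [ -n "${SLURM_NTASKS:-}" ]; then
        echo "$SLURM_NTASKS"
    elif [ -n "${SLURM_JOB_NUM_NODES:-}" ] && [ -n "${SLURM_NTASKS_PER_NODE:-}" ]; then
        echo $(( SLURM_JOB_NUM_NODES * SLURM_NTASKS_PER_NODE ))
    else
        return 1
    fi
}

# Write system/decomposeParDict in the given case directory (default: .).
write_decompose_dict() {
    local n_subdomains="$1"
    local case_dir="${2:-.}"

    # Reject empty, non-numeric or zero values before touching the case.
    case "$n_subdomains" in
        ''|*[!0-9]*)
            echo "invalid number of subdomains: '$n_subdomains'" >&2
            return 1 ;;
    esac
    if [ "$n_subdomains" -lt 1 ]; then
        echo "number of subdomains must be at least 1" >&2
        return 1
    fi

    mkdir -p "$case_dir/system" || return 1
    cat > "$case_dir/system/decomposeParDict" <<EOF
FoamFile
{
    version     2.0;
    format      ascii;
    class       dictionary;
    object      decomposeParDict;
}

numberOfSubdomains ${n_subdomains};

method          scotch;
EOF
}
```

In a job script, you could source this file (the file name is up to you) and call `write_decompose_dict "$(count_subdomains)" || exit 1` before decomposeParHPC. If no task count can be determined, count_subdomains prints nothing and write_decompose_dict refuses the empty value, so the job stops before decomposing with a wrong subdomain count.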